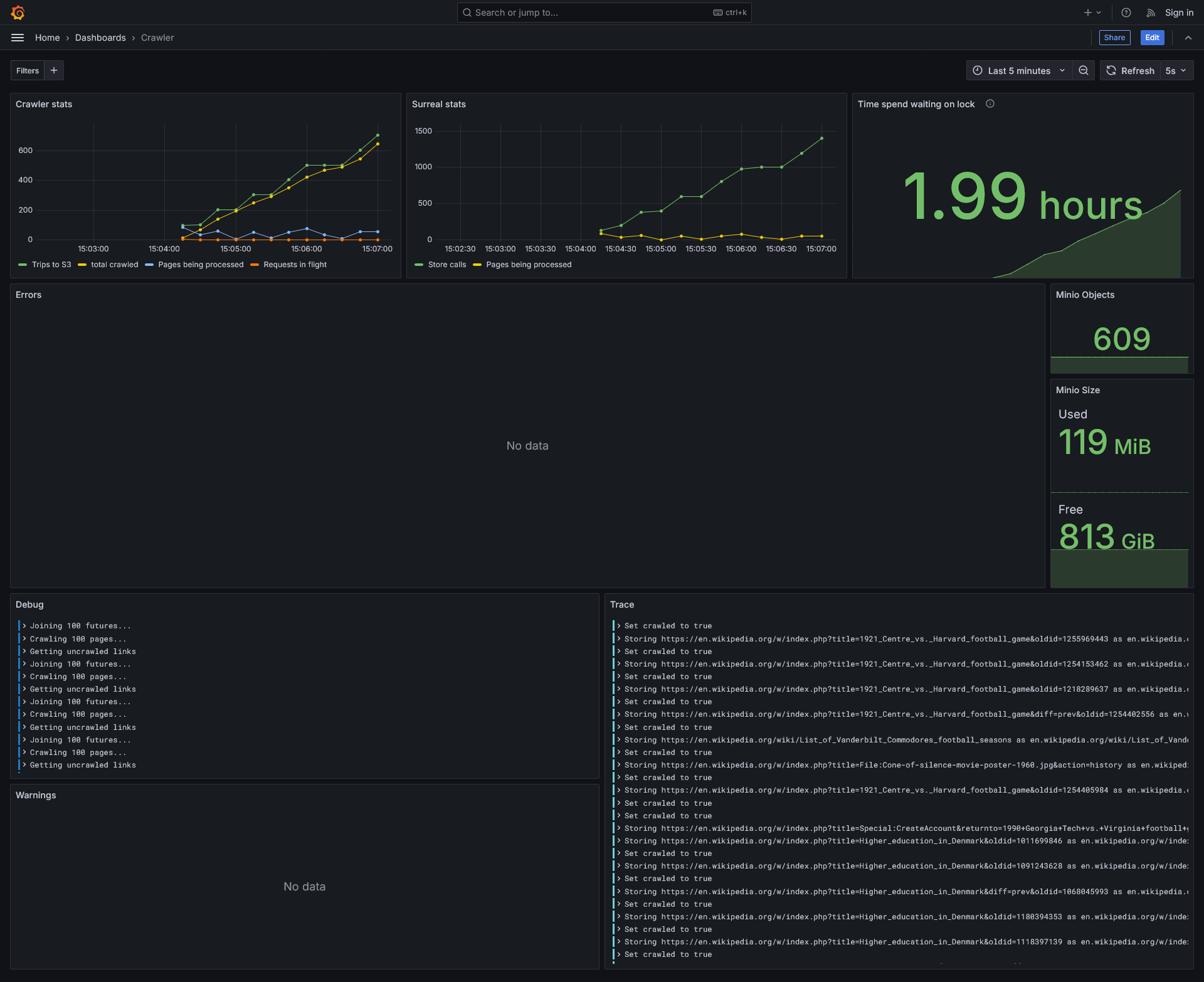

Surreal Crawler

Crawls sites, saving every link it finds to a SurrealDB database. It then repeatedly takes batches of 100 uncrawled links until the crawl budget is reached. The data from each site is saved in a MinIO object store.
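The batch loop described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the in-memory `HashMap` "web", the `fetch_links` helper, and the `crawl` function are all hypothetical stand-ins for the real HTTP fetching and SurrealDB queries.

```rust
use std::collections::{HashMap, HashSet, VecDeque};

/// Hypothetical stand-in for fetching a page: returns the links found on `url`.
fn fetch_links(web: &HashMap<&str, Vec<&str>>, url: &str) -> Vec<String> {
    web.get(url)
        .map(|links| links.iter().map(|l| l.to_string()).collect())
        .unwrap_or_default()
}

/// Crawl from `seed`, taking up to `batch_size` uncrawled links at a time,
/// until `budget` pages have been crawled or no uncrawled links remain.
/// Returns the crawled pages in the order they were visited.
fn crawl(
    web: &HashMap<&str, Vec<&str>>,
    seed: &str,
    batch_size: usize,
    budget: usize,
) -> Vec<String> {
    let mut seen: HashSet<String> = HashSet::new(); // every link ever found
    let mut uncrawled: VecDeque<String> = VecDeque::new(); // found but not yet crawled
    let mut crawled: Vec<String> = Vec::new();

    seen.insert(seed.to_string());
    uncrawled.push_back(seed.to_string());

    while crawled.len() < budget && !uncrawled.is_empty() {
        // Never take more than the batch size, the remaining budget, or the queue length.
        let take = batch_size
            .min(budget - crawled.len())
            .min(uncrawled.len());
        let batch: Vec<String> = uncrawled.drain(..take).collect();

        for url in batch {
            for link in fetch_links(web, &url) {
                // Only queue links that have not been recorded before.
                if seen.insert(link.clone()) {
                    uncrawled.push_back(link);
                }
            }
            crawled.push(url);
        }
    }
    crawled
}

fn main() {
    let mut web = HashMap::new();
    web.insert("a", vec!["b", "c"]);
    web.insert("b", vec!["c", "d"]);
    web.insert("c", vec!["a"]);
    web.insert("d", vec![]);

    // Batch size 2 and a budget of 3 pages (the real crawler uses batches of 100).
    let pages = crawl(&web, "a", 2, 3);
    println!("{:?}", pages);
}
```

In this sketch the budget counts crawled pages; the real crawler may define its budget differently.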

How to use

- Clone the repo and `cd` into it.
- Build the repo with `cargo build -r`.
- Start the Docker containers:
  - `cd` into the docker folder: `cd docker`
  - Bring up the Docker containers: `docker compose up -d`
- From the project's root, edit the `Crawler.toml` file to your liking.
- Run with `./target/release/internet_mapper`.

You can view stats of the project at http://<your-ip>:3000/dashboards

```sh
# Untested script but probably works
git clone https://git.oliveratkinson.net/Oliver/internet_mapper.git
cd internet_mapper
cargo build -r
cd docker
docker compose up -d
cd ..
$EDITOR Crawler.toml
./target/release/internet_mapper
```

TODO

- Domain filtering - prevent the crawler from wandering onto alternate-language versions of Wikipedia.

- Conditionally save content - based on filename or file contents

- GUI / TUI ? - Grafana

- Better asynchronous getting of the sites. Currently it all happens serially.

- Allow for storing asynchronously - dropping the "links to" logic fixes this need

- Control crawler via config file (no recompilation needed)
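As an illustration of the domain filtering item in the list above, here is one way such a check could look. It is only a sketch using the standard library: the `host_of` and `is_allowed` functions are hypothetical names, not part of the project, and the hand-rolled host parsing is a simplification that a real implementation would likely replace with a proper URL parser.

```rust
/// Extract the host from an absolute http(s) URL.
/// Simplified on purpose: it does not handle IPv6 literals such as `[::1]`.
fn host_of(url: &str) -> Option<&str> {
    let rest = url
        .strip_prefix("https://")
        .or_else(|| url.strip_prefix("http://"))?;
    // The authority ends at the first path, query, or fragment delimiter.
    let authority = rest
        .split(|c: char| c == '/' || c == '?' || c == '#')
        .next()?;
    // Drop any `user:pass@` prefix, then any `:port` suffix.
    let host = authority.rsplit('@').next()?;
    let host = host.split(':').next()?;
    if host.is_empty() {
        None
    } else {
        Some(host)
    }
}

/// Returns true only if the URL's host exactly matches one of `allowed_hosts`.
fn is_allowed(url: &str, allowed_hosts: &[&str]) -> bool {
    match host_of(url) {
        Some(host) => allowed_hosts
            .iter()
            .any(|allowed| host.eq_ignore_ascii_case(allowed)),
        None => false,
    }
}

fn main() {
    let allowed = ["en.wikipedia.org"];
    for url in [
        "https://en.wikipedia.org/wiki/Rust",
        "https://de.wikipedia.org/wiki/Rust",
    ] {
        println!("{url} allowed: {}", is_allowed(url, &allowed));
    }
}
```

Matching on the exact host rather than a suffix such as `wikipedia.org` is what keeps the other language subdomains out.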

3/17/25: Took >1hr to crawl 100 pages

3/19/25: Took 20min to crawl 1000 pages. This meant we stored 1000 pages, 142,997 urls, and 1,425,798 links between the two.

3/20/25: Took 5min to crawl 1000 pages

3/21/25: Took 3min to crawl 1000 pages
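To compare these runs on one scale, the timings can be converted to pages per minute. The snippet below is just that arithmetic; the `pages_per_minute` helper is a hypothetical name, and the 3/17 figure is an upper bound on the rate because that run took more than an hour.

```rust
/// Throughput in pages per minute for a run of `pages` pages taking `minutes` minutes.
fn pages_per_minute(pages: u32, minutes: f64) -> f64 {
    f64::from(pages) / minutes
}

fn main() {
    // (date, pages crawled, minutes taken) from the log above.
    // The 3/17 run took *more* than 60 minutes, so its rate is at most the value shown.
    let runs = [
        ("3/17/25", 100, 60.0),
        ("3/19/25", 1000, 20.0),
        ("3/20/25", 1000, 5.0),
        ("3/21/25", 1000, 3.0),
    ];
    for (date, pages, minutes) in runs {
        println!("{date}: {:.1} pages/min", pages_per_minute(pages, minutes));
    }
}
```

Going from 20 minutes to 3 minutes per 1000 pages is a speedup of roughly 6.7x.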
